eLearning Solutions to Mitigate Unconscious Hiring Bias

The Hiring Bias

In study after study, the hiring process has been shown to be biased and unfair, with sexism, racism, ageism, and other extraneous factors playing a malevolent role. Instead of being recruited on their skills or experience, interviewees often get the nod for reasons that have little to do with the attributes they bring to an employer.

“This causes us to make decisions in favor of one person or group to the detriment of others,” says Francesca Gino, a professor at Harvard Business School, describing the consequences in the workplace. “This can stymie diversity, recruiting, promotion, and retention efforts.”

Companies that adhere to principles of impartial, unbiased behavior and want to increase workforce diversity are already hard-pressed to hire the best talent in the nation’s current environment of full employment and staff scarcity.

Five Main Grounds for Hiring Bias

Researchers have identified a dozen or so hiring biases, starting with recruiting ads whose phrasing emphasizes attributes, such as “competitive” and “determined”, that are associated with men. Studies have also found that even seasoned HR recruiters often fall prey to faulty associations.

Here are five of the most frequently cited reasons for the unintended bias in the hiring process:

- Confirmation Bias: Instead of proceeding with all the traditional aspects of an interview, interviewers often make up their minds in the first few minutes of talking with a candidate. The rest of the interview is then conducted in a manner to simply confirm their initial impressions.

- Expectation Anchor: In this case, interviewers fixate on one attribute that the interviewee possesses, at the expense of the backgrounds and skills that other applicants bring to the process.

- Availability Heuristic: Although this may sound somewhat technical, all it means is that the interviewer judges the candidate by whatever comes to mind most readily. Examples might be the applicant’s height or weight, or something as mundane as his or her name reminding the interviewer of someone else.

- Intuition-Based Bias: This applies to interviewers who pass judgment based on their “gut feeling” or “sixth sense”. Rather than resting on the candidate’s achievements, the judgment depends solely on the interviewer’s frame of mind and his or her own prejudices.

- Halo Effect: When an applicant matches the interviewer’s preconceptions about what a candidate ought to offer in one significant respect, everything else gets blotted out. This often occurs when, within the first few minutes of talking with an applicant, the interviewer decides in his or her favor at the expense of everything that other candidates may have to offer.

Why Bias Is a Problem

In his book The Difference: How the Power of Diversity Creates Better Groups, Firms, Schools, and Societies, Scott E. Page, professor of Complex Systems, Political Science, and Economics at the University of Michigan, uses scientific models and corporate examples to demonstrate how diversity in staffing leads to organizational advantages.

Despite the mountain of evidence, many fast-growing companies are still not deliberate enough in their recruiting practices, often allowing unconscious biases to permeate their methods.

Diversity in hiring, an oft-used term, is essentially about bringing in different ways of thinking. For example, a group of like-minded employees might get stuck on a problem that a more diverse team could tackle successfully by approaching it from different angles.

Automated Solutions

Although hiring bias is widely condemned, that in no way means it doesn’t proliferate in large and small organizations alike. The tech industry, and Silicon Valley in particular, was shaken recently by accusations of workplace bias, driving many HR managers and C-suite executives to look for “blind” hiring solutions.

To pave the way for a more diverse workforce built purely on merit, recruiting software now exists that systematizes vetting and keeps each candidate anonymous. These packages let companies select candidates through a blind process: instead of viewing an applicant’s resumé through the usual prism of schools, diplomas, and past employers, the first wave of screening can be based purely on abilities and achievements.
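The core of blind screening is separating who the candidate is from what they can do. The following is a minimal Python sketch of that idea, not the design of any particular product: the `Application` and `BlindProfile` fields, the `anonymize` function, and the HR-held "vault" that maps an opaque ID back to the full application are all hypothetical.

```python
import uuid
from dataclasses import dataclass


@dataclass
class Application:
    """Full application as received (hypothetical fields)."""
    name: str
    email: str
    schools: list[str]
    employers: list[str]
    skills: list[str]
    achievements: list[str]


@dataclass
class BlindProfile:
    """What first-round screeners see: no name, contact details, schools or employers."""
    candidate_id: str
    skills: list[str]
    achievements: list[str]


def anonymize(app: Application, vault: dict[str, Application]) -> BlindProfile:
    """Replace identity with an opaque ID; the vault (held by HR, not screeners) maps it back."""
    candidate_id = uuid.uuid4().hex[:8]  # short random ID; collisions are very unlikely at this scale
    vault[candidate_id] = app
    return BlindProfile(candidate_id, list(app.skills), list(app.achievements))


vault: dict[str, Application] = {}
applicant = Application(
    name="Jane Doe",
    email="jane@example.com",
    schools=["Example University"],
    employers=["Example Corp"],
    skills=["SQL", "Python"],
    achievements=["Cut monthly reporting time from 3 days to 4 hours"],
)
profile = anonymize(applicant, vault)
print(profile.skills, profile.achievements)
```

Screeners only ever handle `BlindProfile` objects; identity is re-attached through the vault after the first screening round.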

Other packages help employers write blind recruiting ads whose job descriptions avoid key words and phrases associated with a particular demographic: masculine-coded words such as “driven”, “adventurous”, or “independent”, and feminine-coded words such as “honest”, “loyal”, and “interpersonal”.
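A basic version of such a check can be sketched in Python. This is a minimal sketch that assumes exact word matching against the example words quoted in this article. Commercial tools use longer, research-based word lists and match word stems, so treat the lists and the function `flag_coded_words` as hypothetical.

```python
import re

# Example words quoted in this article; real tools use longer, research-based lists.
MASCULINE_CODED = {"competitive", "determined", "driven", "adventurous", "independent"}
FEMININE_CODED = {"honest", "loyal", "interpersonal"}


def flag_coded_words(ad_text: str) -> dict[str, list[str]]:
    """Return the masculine- and feminine-coded words found in a job ad, in order of appearance."""
    words = re.findall(r"[a-z]+", ad_text.lower())  # crude tokenizer: lowercase letter runs
    return {
        "masculine": [w for w in words if w in MASCULINE_CODED],
        "feminine": [w for w in words if w in FEMININE_CODED],
    }


ad = "We want a driven, competitive engineer with strong interpersonal skills."
print(flag_coded_words(ad))
# {'masculine': ['driven', 'competitive'], 'feminine': ['interpersonal']}
```

An editor would then swap flagged words for neutral alternatives (for example, describing the actual tasks instead of “driven”).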

eLearning Case Studies

Companies are now attempting to make diversity and inclusion, from entry-level employees to the executive suite, hallmarks of their corporate culture. To identify and address unconscious bias in all processes and behaviors, companies can introduce an unconscious bias training curriculum for first-line managers, drawing on the courseware and content of eLearning companies.

Confronting Hiring Bias in a Virtual Reality Environment

Virtual Reality (VR) technology can help confront unintended hiring bias. In a simulated setting, the user controls an avatar that can take on any of a number of applicant demographics. Depending on the avatar’s gender or ethnicity, the user experiences bias firsthand during question-and-answer sessions. Such a solution would combine an immersive VR environment, a diverse collection of avatars, and sample scenarios to show participants where bias occurs and help them understand it.

To Infinity and Beyond!

Vamsi Nekkanti looks at the future of data centers – in space and underwater

Data centers can now be found on land all over the world, and more are being built all the time. Because so much land is already being used for them, Microsoft is making waves in the industry with trials of sealed data centers underwater.

Microsoft has already filed a patent application for an Artificial Reef Data Center, an underwater cloud whose cooling system uses the ocean as a large heat exchanger, with intrusion detection for submerged data centers. So, with an underwater cloud possibly becoming a reality, is space the next (or final) frontier?

As the cost of developing and launching satellites continues to fall, the next big thing is combining IT (Information Technology) principles with satellite operations to provide data center services in Earth orbit and beyond.

Until recently, satellite hardware and software were inextricably linked and purpose-built for a single mission. With the emergence of commercial off-the-shelf processors, open-standards software, and standardized hardware, firms can repurpose orbiting satellites for multiple activities simply by uploading new software, and can share a single spacecraft by hosting hardware for two or more users.

This “Space as a Service” idea may be used to run multi-tenant hardware in a micro-colocation model or to provide virtual server capacity for computing “above the clouds.” Several space firms are incorporating micro-data centers into their designs, allowing them to analyze satellite imaging data or monitor dispersed sensors for Internet of Things (IoT) applications.

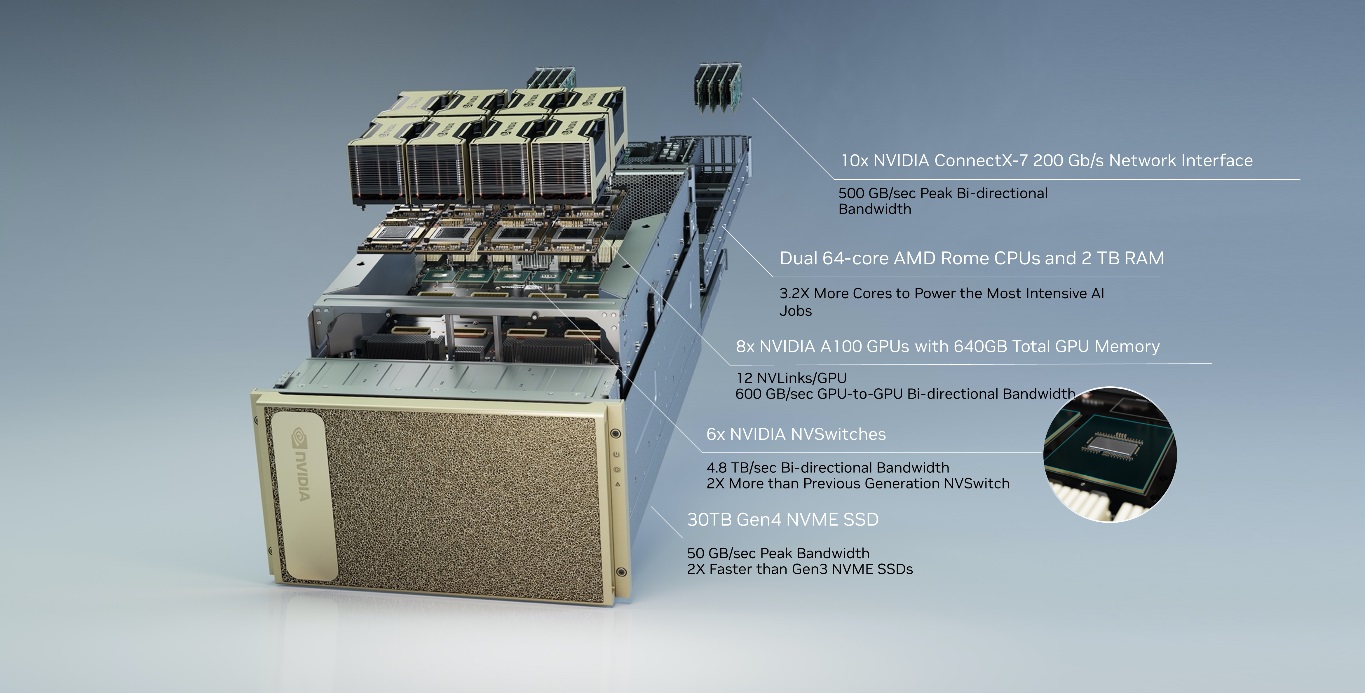

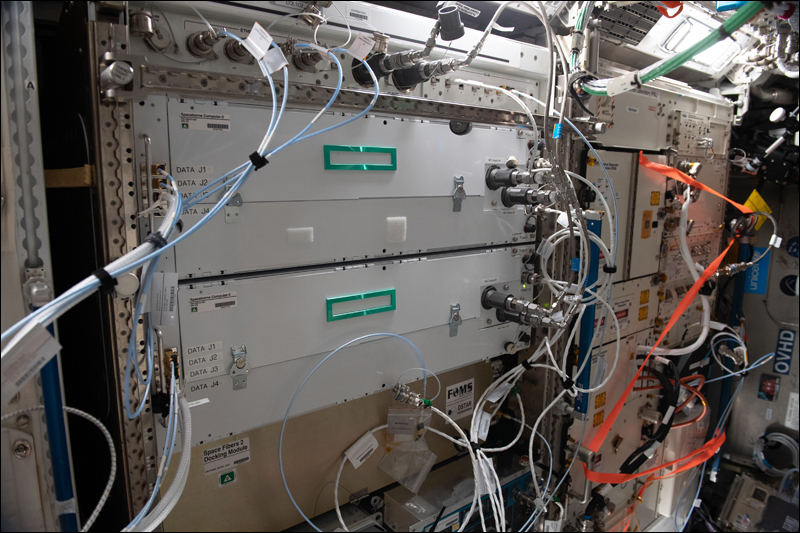

HPE Spaceborne Computer-2 (a set of HPE Edgeline Converged EL4000 Edge and HPE ProLiant machines, each with an Nvidia T4 GPU to support AI workloads) is the first commercial edge computing and AI solution installed on the International Space Station; it was installed in the first half of 2021 (Image credit: NASA)

Advantages of Space Data Centers

A space data center would collect satellite data, including images, and analyze it locally. Only valuable data would be transmitted down to Earth, cutting transmission costs and reducing the volume of data that has to be sent down.
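The “analyze locally, send only what is valuable” idea can be sketched as a simple on-board filter. This is a minimal Python sketch under stated assumptions: each image carries a hypothetical `cloud_fraction` score produced by an on-board model, and “valuable” is assumed to mean mostly cloud-free. Real missions would apply their own criteria.

```python
from dataclasses import dataclass


@dataclass
class Image:
    image_id: str
    size_mb: float
    cloud_fraction: float  # hypothetical on-board model output: 0.0 (clear) to 1.0 (fully cloudy)


def select_for_downlink(images: list[Image], max_cloud: float = 0.3) -> list[Image]:
    """Keep only images the on-board model judges useful (here: mostly cloud-free)."""
    return [img for img in images if img.cloud_fraction <= max_cloud]


captured = [
    Image("A", 120.0, 0.05),
    Image("B", 120.0, 0.90),
    Image("C", 120.0, 0.25),
    Image("D", 120.0, 0.75),
]
to_send = select_for_downlink(captured)
sent_mb = sum(img.size_mb for img in to_send)
total_mb = sum(img.size_mb for img in captured)
print([img.image_id for img in to_send], f"{sent_mb:.0f} of {total_mb:.0f} MB downlinked")
# ['A', 'C'] 240 of 480 MB downlinked
```

In this toy run, half of the captured data never needs to cross the space-to-ground link.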

A space data center could be powered by free, abundant solar radiation and cooled by the cold of space. Apart from a solar flare or a meteoroid strike, there would be little chance of a natural calamity taking it down. Spinning disk drives might also benefit from the space environment: the absence of gravity could let the drives spin more freely, while the extreme cold could help servers handle more data without overheating.

Separately, the European Space Agency is collaborating with Intel and Ubotica on the PhiSat-1, a CubeSat with AI (Artificial Intelligence) computing aboard. LyteLoop, a start-up, seeks to cover the sky with light-based data storage satellites.

NTT and SKY Perfect JSAT, through a joint venture (JV), want to begin commercial services in 2025 and have identified three primary potential applications for the technology.

The first, a “space sensing project,” would develop an integrated space and Earth sensing platform that collects data from IoT terminals deployed around the world and delivers a service using the world’s first low Earth orbit satellite MIMO (Multiple Input Multiple Output) technology.

The second, a space data center, would be powered by NTT’s photonics-electronics convergence technology, which decreases satellite power consumption and is better able to resist the damaging effects of radiation in space.

Finally, the JV is looking into “beyond 5G/6G” applications to potentially offer ultra-wide, super-fast mobile connection from space.

The Challenge of Space-Based Data Centers

Of course, there is one major obstacle when it comes to space-based data centers. Unlike undersea data centers, which could in theory be raised to the surface or otherwise made accessible to humans, data centers launched into space would have to be completely maintenance-free. That is a significant obstacle, because sending IT astronauts on repair or maintenance missions is neither feasible nor cost-effective! Furthermore, many firms like to know exactly where their data is housed and to be able to visit a physical site where they can see their servers in action.

While there are some obvious benefits in terms of speed, there are also concerns associated with pushing data and computing power into orbit. In 2018, Capitol Technology University published an analysis of many unique threats to satellite operations, including geomagnetic storms that cripple electronics, space dust that turns to hot plasma when it reaches the spacecraft, and collisions with other objects in a similar orbit.

The concept of space-based data centers is intriguing, but for the time being, and until many problems are worked out, data centers will continue to dot the terrain and the ocean floor.

Elite Teams Recover Systems from Failures in No Time (MTTR)

Credits: Published by our strategic partner Kaiburr

Effective teams, working in the right environment under transformative leadership, by and large achieve their goals, innovate consistently, and resolve issues and fix problems quickly.

DevOps primarily aims to improve software engineering practices, culture, and processes, and to build effective teams that better serve and delight the users of IT systems. It drives productivity through Continuous Integration and Continuous Deployment (CI/CD), delivering services with speed while improving system reliability.

A system’s productivity is higher with high-performance teams and lower with low-performance teams. High-performance teams are more agile and highly reliable, and measuring the right metrics gives us better insight into team performance.

DORA (DevOps Research and Assessment), drawing on research with several thousand software professionals across wide geographic regions, found that elite, high, medium, and low performers can be distinguished by just four metrics covering speed and stability: deployment frequency, lead time for changes, change failure rate, and time to restore service.

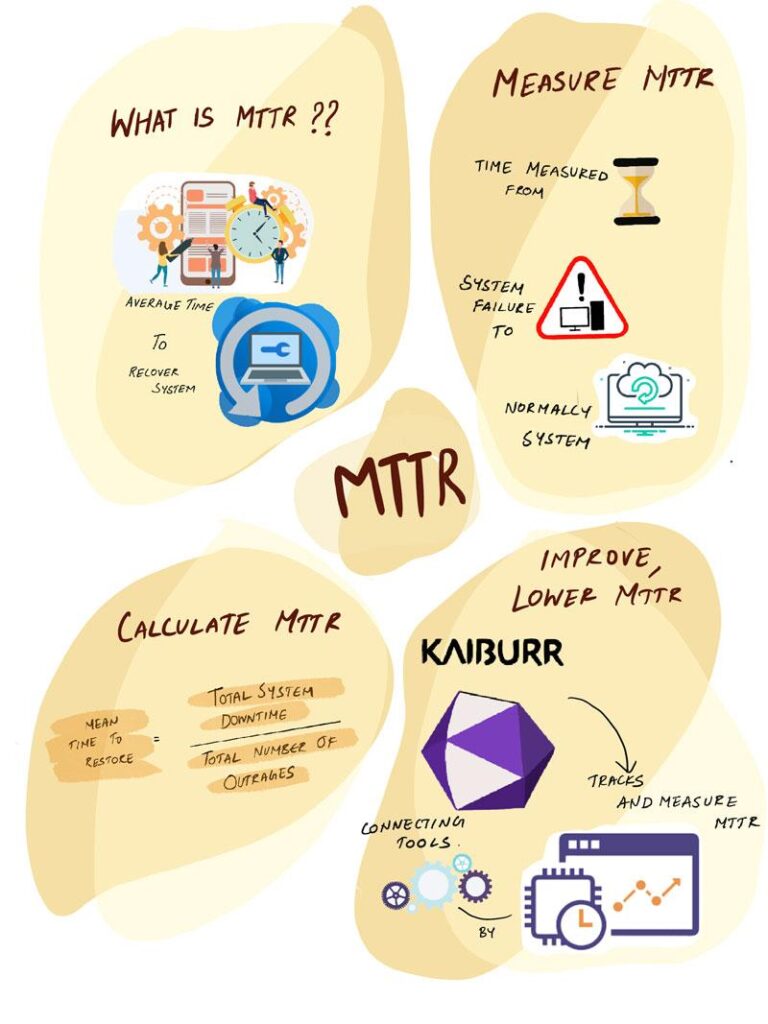

The metric ‘mean time to restore’ (MTTR) is the average time taken to restore or recover a system to normal operation after a production failure. By improving MTTR, our teams become elite and reduce the heavy cost of system downtime.

Measure MTTR

The restore time for a failure is measured from the moment the system stops serving users’ or other systems’ requests as expected to the moment it is brought back to its intended behavior; MTTR is the average of these times.

A system can fail because of semantic errors in newly deployed features, functions, or change requests; memory or integration failures; malfunctioning physical components; network issues; external threats (hacks); or a plain system outage.

A failure of the running system against its intended purpose is always an unplanned incident, and restoring it to normal in the least possible time depends on the team’s capability and preparedness. Lower MTTR values are better: a high MTTR signals an unstable system and a team that cannot quickly diagnose the problem and provide a solution.

MTTR doesn’t account for the time and resources teams spend on preparedness and proactive measures, but a low value indirectly reflects the team’s strengths and efforts and the savings it brings the organization. MTTR is a measure of team effectiveness.

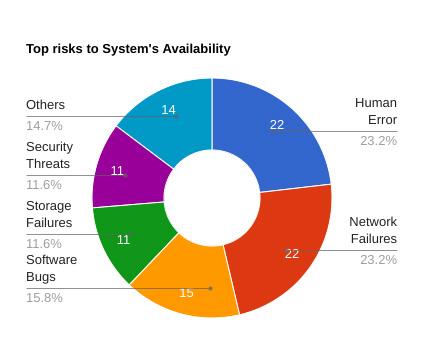

According to CIO insights, 73% of respondents say system downtime costs their organization more than $10,000 per day, and the top risks to system availability are human error, network failures, software bugs, storage failures, and security threats (hacks).

How to Calculate MTTR

We can use a simple formula to calculate MTTR.

MTTR (mean time to restore) = total system downtime / total number of outages
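To make the formula concrete, here is a minimal Python sketch. It assumes each outage is recorded as a (failed_at, restored_at) pair of timestamps; the function name `mttr` and the sample outages are hypothetical.

```python
from datetime import datetime, timedelta


def mttr(outages: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean time to restore = total downtime / number of outages.

    Each outage is a (failed_at, restored_at) pair.
    """
    if not outages:
        raise ValueError("MTTR is undefined when there are no outages")
    total_downtime = sum((restored - failed for failed, restored in outages), timedelta())
    return total_downtime / len(outages)


outages = [
    (datetime(2021, 3, 1, 9, 0), datetime(2021, 3, 1, 9, 45)),     # 45 min
    (datetime(2021, 3, 8, 14, 0), datetime(2021, 3, 8, 16, 0)),    # 120 min
    (datetime(2021, 3, 20, 2, 30), datetime(2021, 3, 20, 2, 45)),  # 15 min
]
print(mttr(outages))  # 1:00:00  (180 min of downtime / 3 outages)
```

Note that one long outage dominates the mean here, which is why teams often look at the distribution of restore times as well as the average.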

If a system is down for longer, MTTR is naturally high, which may signal that the system is newly deployed, complex, poorly understood, or an unstable version. A system that goes down for longer and more frequently causes business disruption and user dissatisfaction. MTTR depends on the team’s experience and skills and on the tools it uses: a highly experienced, suitably skilled team with the right tools diagnoses problems quickly and restores service in less time. A low MTTR signals that the team restores the system quickly and effectively, and that it is highly motivated, collaborates well, and is well led in a healthy culture.

Well-developed, elite teams are like a Ferrari F1 pit crew: in the blink of an eye, with superb preparation, coordination, and collaboration, they change tyres, fix the car, and send it back into the race. The best analogy for MTTR is the time from the moment the F1 car enters the pit stop to the moment it is released back onto the track. The productivity and automation tools our DevOps teams use are like the tools the F1 pit crew uses.

How to Improve (Lower) MTTR

If a system is stable and MTTR is still considerably high, there is plenty of room for improvement. In the present age of AI, we have the right tools and DevOps practices to transform teams into high performers and lower system MTTR. DORA reports say high-performing teams recover from downtime 96x faster than low performers.

They take just a few minutes to recover a system from failures, whereas others take several days. DevOps teams that used automation tools reduced their costs by at least 30% and lowered MTTR by 50%. The 2021 DevOps report says 70% of IT organizations are stuck at the low-to-mid level of DevOps evolution.

Kaiburr’s AllOps platform helps track and measure MTTR by connecting to tools such as JIRA, ServiceNow, Azure Boards, and Rally, and its near-real-time views help you continuously improve your MTTR.

You can also track and measure other KPIs, KRIs and metrics like Change Failure Rate, Lead Time for Changes, Deployment Frequency. Kaiburr helps software teams to measure themselves on 350+ KPIs and 600+ Best Practices so they can continuously improve every day.
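For reference, the other three DORA metrics can be computed from a simple deployment log. This is a minimal Python sketch using common simplified definitions, not Kaiburr’s implementation: the `Deployment` record, its `caused_failure` flag, the observation window, and the sample data are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    committed_at: datetime  # when the change was committed
    deployed_at: datetime   # when it reached production
    caused_failure: bool    # assumed flag: did it trigger an incident, rollback or hotfix?


def change_failure_rate(deps: list[Deployment]) -> float:
    """Share of deployments that caused a failure in production."""
    return sum(d.caused_failure for d in deps) / len(deps)


def deployment_frequency(deps: list[Deployment], window_days: int) -> float:
    """Average deployments per day over the observation window."""
    return len(deps) / window_days


def lead_time_for_changes(deps: list[Deployment]) -> timedelta:
    """Median time from commit to running in production."""
    return median(d.deployed_at - d.committed_at for d in deps)


def make(day: int, lead_hours: int, failed: bool) -> Deployment:
    """Build a sample deployment committed `day` days into the window."""
    committed = datetime(2021, 6, 1) + timedelta(days=day)
    return Deployment(committed, committed + timedelta(hours=lead_hours), failed)


deps = [make(0, 2, False), make(2, 4, True), make(4, 1, False), make(6, 26, False), make(8, 3, False)]
print(change_failure_rate(deps))       # 0.2
print(deployment_frequency(deps, 10))  # 0.5
print(lead_time_for_changes(deps))     # 3:00:00
```

The median is used for lead time so that one slow change (26 hours here) does not distort the picture.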

Reach us at marketing@sifycorp.com to get started with metrics driven continuous improvement in your organization.



AI and human existence: The ABCs of ADLs

Explained: How even the simplest of day-to-day activities are deeply influenced by ML & AI. Will it become a life-changer?

On any given day, a typical person performs several activities ritually and without fail. From cleaning to eating and drinking and finding our way around our environment, human life involves many activities known as Activities of Daily Living, or ADLs. In the past, we performed these activities without any technological help or advice. Throughout human history, man has invented many a tool to improve the execution of these ADLs, and our current age is no different. Technologies such as ‘Machine Learning’ and ‘Artificial Intelligence’ have enabled many advances in our lifestyle. Some assist us; however, there are a few that we need to be cautious about.

Let us stride through these ADLs one by one by following a simple activity timeline of a person’s one-day life as we discover how Machine Learning is shaping human life.

Morning – 5 AM to 10 AM

A day for us typically starts with several activities. Brushing, eating, washing, etc., are a few morning routines that we follow in our day-to-day life.

A day begins by waking up. Nowadays, smart devices such as fitness bands and smartwatches tell us more about our sleep. These devices leverage AI and Machine Learning to provide increasingly accurate results, helping us improve our sleep quality and behavior, which in turn improves our health.

After waking up, we brush our teeth to maintain hygiene and dental wellness. Even here, smart wrist devices and smart toothbrushes that use AI and Machine Learning measure our hand movements, direction, speed, etc., helping us improve the quality of our brushing, which in turn keeps our oral health in check. One example of such an application is the Oral-B Genius X.

Nowadays, washing our hands regularly to maintain hygiene and immunity is very important, especially given the COVID situation around the globe. Much research has gone into using technological developments to monitor hand hygiene and give individuals a quality assessment. Many private hospitals have partnered with industry to implement smart solutions that assess hand-hygiene quality, and their doctors use them daily to improve their own hygiene and that of their patients. One example of such an application is the MedSense Clear system, developed by MIT Media Lab alumni.

Physical health and staying in shape have become underrated amid the new awareness around mental health and its importance. Nevertheless, staying in shape is a very important part of people’s lives, as it indirectly contributes to mental well-being. Diet planning and healthy eating must also be taken care of, and with the help of mobile and computer applications, we can now plan our diets efficiently. With the rise of ‘Machine Learning’, these systems are being scrutinized, and further research is under way on problems such as accounting for people’s eating preferences, to provide even better solutions.

Mid-Day Activities – 10 AM to 3 PM

Mid-day activities span a very wide range of tasks. We regularly use map applications to commute to a certain location. These applications use AI and Machine Learning extensively to find the best route possible by predicting traffic and other obstacles before we set out. They suggest the best means of transport and the best route to take; they even track transport services and alert us to breakdowns. Examples of such applications are Google Maps, Apple Maps, etc.
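Real route planners are far more sophisticated, but the core idea of finding the path with the lowest predicted travel time can be sketched with Dijkstra’s shortest-path algorithm. In this minimal Python example, the road network and its travel times are hypothetical, and the traffic prediction is assumed to be already baked into the edge weights. It is not how Google Maps or Apple Maps work internally.

```python
import heapq


def fastest_route(graph: dict[str, dict[str, float]], start: str, goal: str) -> tuple[float, list[str]]:
    """Dijkstra's algorithm; edge weights are predicted travel times in minutes."""
    queue = [(0.0, start, [start])]  # (minutes so far, node, path taken)
    best = {start: 0.0}
    while queue:
        minutes, node, path = heapq.heappop(queue)
        if node == goal:
            return minutes, path
        if minutes > best.get(node, float("inf")):
            continue  # stale entry: a faster way to this node was already found
        for neighbour, travel in graph.get(node, {}).items():
            candidate = minutes + travel
            if candidate < best.get(neighbour, float("inf")):
                best[neighbour] = candidate
                heapq.heappush(queue, (candidate, neighbour, path + [neighbour]))
    raise ValueError(f"no route from {start} to {goal}")


# Hypothetical road network; weights already include predicted congestion.
roads = {
    "Home": {"Highway": 10, "MainSt": 5},
    "Highway": {"Office": 10},
    "MainSt": {"Office": 25},
}
print(fastest_route(roads, "Home", "Office"))  # (20.0, ['Home', 'Highway', 'Office'])
```

Main Street is the quicker first hop, but its predicted congestion makes the highway the faster route overall; when predictions change, re-running the search with updated weights gives a new suggestion.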

Many people work in closed environments, either in the office or at home, and are often sedentary and desk-bound. It is essential to take breaks, go for a short walk, and stretch, and equally important to keep ourselves hydrated. Smart devices can track how long we sit continuously, prompting us to take a break or even correct our posture if required. Some also help us stay hydrated and suggest improvements based on the environment and air quality around us. Examples of such devices are the Apple Watch, Mi Band, etc.

Evening Activities – 3 PM to 8 PM

Evenings are when we generally try to relax after work and indulge in leisure activities that differ from individual to individual. These activities involve a lot of AI and Machine Learning, as the applications behind them draw on our data, from our preferences to our recent activity. These technology-driven applications and systems need to be handled with utmost caution. We all use e-commerce extensively because it saves the time and energy of buying a product by letting us shop from wherever we are. This has huge benefits, but we are sometimes unaware of the implications. A few applications track our technology usage extensively, keeping track of us more than we know. They use this data, with the help of AI and Machine Learning, to improve their recommendation systems and suggest products before we even search for them. If we are not using an official or recognized application, we risk a privacy breach, with our shopping history being stolen or hacked.

Social media applications have invaded our lives. We connect via different platforms to share and exchange ideas and also to relax. However, some of these applications hold sensitive data about us. They run plenty of recommendation systems that are constantly updated to feed us posts we enjoy. But are we compromising sensitive data such as our name, address, and phone number, as well as preferences that reveal who we are, in the process? An imminent danger to watch out for here is ‘data leakage’. Some of these applications do not even hash or encrypt the passwords they store.

Other evening activities include working out, which some people prefer to do in the morning. With the prevailing COVID situation, many of us have cut back on going to the gym. Many applications and systems have been created to help us work out at home, and with the help of AI and Machine Learning, they streamline our workout routine.
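On the password point above: the standard defense is for an application to store only a salted, deliberately slow hash of each password, never the password itself. The following is a minimal Python sketch using the standard library’s PBKDF2 (`hashlib.pbkdf2_hmac`). The iteration count is an illustrative assumption, and production systems often prefer dedicated schemes such as bcrypt, scrypt, or Argon2.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # illustrative work factor; tune to your hardware and current guidance


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) using PBKDF2-HMAC-SHA256; store both, never the password."""
    salt = os.urandom(16)  # a fresh random salt per user defeats precomputed lookup tables
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, stored_key: bytes) -> bool:
    """Re-derive the key from the login attempt and compare it in constant time."""
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(key, stored_key)


salt, key = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, key))  # True
print(verify_password("wrong guess", salt, key))                   # False
```

Even if such a database leaks, attackers get only salts and slow-to-crack hashes rather than readable passwords.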

End Day Activities – 8 PM to 5 AM

End-of-day activities help us unwind as we call it a day. We perform some of the activities mentioned previously, such as eating, brushing, and washing. Some smart devices help by sounding an alarm to indicate bedtime. This relies heavily on technology, as the device tracks our previous sleep history to tell us which areas we need to improve. These applications help us learn from our sleep patterns, such as how much time we spent in deep sleep. The systems rely heavily on AI and on sensors that accurately measure our breathing and heartbeat to provide insights. Many smartwatches and fitness bands provide this feature.

As we come to the end of the day, these applications help us save energy and time and lead an enriching life. Having said that, we must also be cautious about their influence on us. Take precautions and double-check an application’s privacy policy. Always use trusted applications instead of picking one at random that might use unwanted cookies to store sensitive data, which can lead to an imminent threat. So, what’s your ADL?

In case you missed:

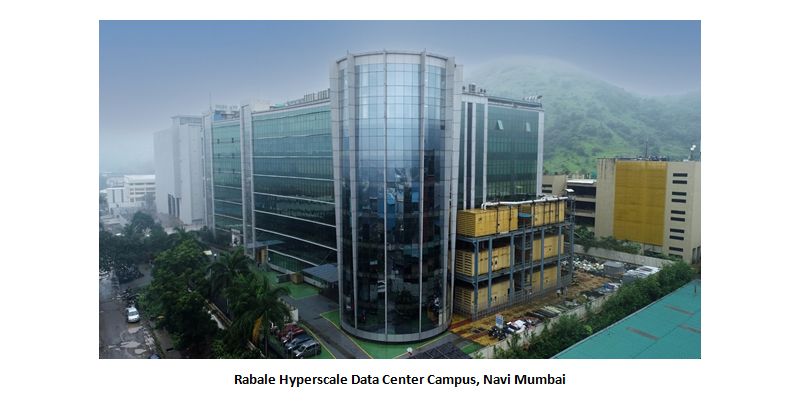

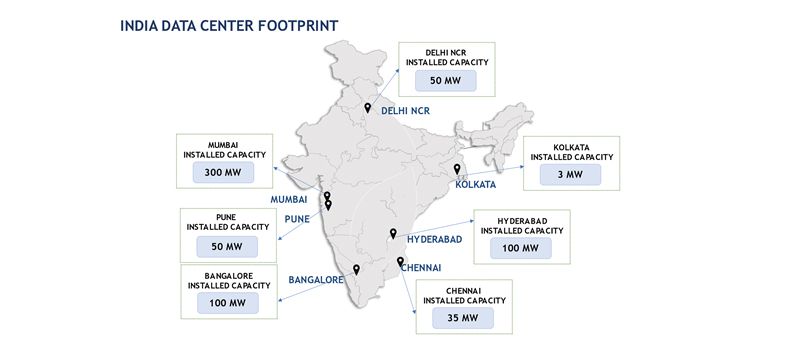

How Hyperscale Data Centers are reshaping India’s IT

In today’s times, a common question arises while discussing technology: what is the difference between Data Centers and Hyperscale Data Centers?

The answer: Data Centers are like hotels, where the spaces are shared by multiple guests, whereas in the case of Hyperscale Data Centers, the entire building or campus is dedicated to a single customer.

Companies like Amazon, Google, Microsoft, Facebook, and OTT platforms, which have millions of end-users, have woven their services into our day-to-day lives to cater to our personal and professional needs. Data centers are the backbone of this digital world.

This is where Hyperscale Data Centers come into play, providing seamless experiences to such massive numbers of end-users.

The term Hyperscale refers to the ability of an infrastructure to scale up as demand for a service increases. The infrastructure comprises computers, storage, memory, networks, etc., and maintaining it is not an easy task. The machines, the server-hall temperature and humidity, and other critical parameters are monitored 24×7 by the Building Management System (BMS).
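At its simplest, this kind of round-the-clock monitoring compares each sensor reading against an allowed band and raises an alert when a reading falls outside it. The following is a minimal, hypothetical Python sketch, not how any particular BMS works. Sensor names and limits are assumptions; the temperature band matches the commonly cited ASHRAE recommended inlet range of 18–27 °C, and the humidity band is purely illustrative.

```python
from dataclasses import dataclass

# Illustrative limits only; each facility sets its own.
LIMITS = {
    "inlet_temp_c": (18.0, 27.0),
    "relative_humidity_pct": (20.0, 80.0),
}


@dataclass
class Reading:
    sensor: str
    metric: str
    value: float


def check(readings: list[Reading]) -> list[str]:
    """Return an alert message for every reading outside its configured band."""
    alerts = []
    for r in readings:
        low, high = LIMITS[r.metric]
        if not low <= r.value <= high:
            alerts.append(f"{r.sensor}: {r.metric}={r.value} outside [{low}, {high}]")
    return alerts


readings = [
    Reading("hall1-rack07", "inlet_temp_c", 24.5),
    Reading("hall1-rack12", "inlet_temp_c", 29.1),
    Reading("hall1-crac2", "relative_humidity_pct", 45.0),
]
print(check(readings))
# ['hall1-rack12: inlet_temp_c=29.1 outside [18.0, 27.0]']
```

A real BMS would poll sensors continuously, deduplicate repeated alerts, and escalate to on-call staff; this sketch shows only the threshold comparison at the heart of it.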

Data Centers are important because everyone uses data. It is safe to say that almost everyone, from individual users like you and me to multinationals, has used the services of data centers at some point. Whether you’re sending emails, shopping online, playing video games, or casually browsing social media, every byte of your online data is stored in a data center. As remote work quickly becomes the new standard, the need for data centers is even greater. The cloud data center is rapidly becoming the preferred mode of data storage for medium and large enterprises because it is much more secure than storing information on traditional hardware devices. Cloud data centers provide a high degree of security protection, such as firewalls and backup components in the event of a security breach. The COVID-19 pandemic paved the way for the work-from-home culture, and global internet traffic increased by 40% in 2020.

Also, the rise of new technologies like the Internet of Things (IoT), Artificial Intelligence (AI), Machine Learning (ML), 5G, Augmented Reality (AR), Virtual Reality (VR) and Blockchain caused an explosion of data generation and an increased demand for storage capacities.

Cloud infrastructure has helped businesses and governments respond to the pandemic, and demand for cloud data center facilities has increased accordingly. Meeting this demand requires heavy infrastructure with a lot of power.

Data Centers have quite a negative impact on the environment because of their large power consumption, and they account for 2% of global greenhouse gas emissions. To reduce their carbon footprint and work towards a sustainable environment, many data center providers globally have started using power from renewable sources such as solar and wind through Power Purchase Agreements (PPAs). Data center power consumption can also be lowered by regularly upgrading systems with new technologies and maintaining the existing infrastructure.

The Indian market will see multifold growth in the Data Center industry thanks to the ease of doing business in the country and the attractive subsidies provided by state governments, with huge investments committed over the next four years.

Interesting facts about Data Center:

- A large Data Center uses the electricity equivalent to a small Indian town.

- The largest data center in the world, in China, covers 10.7 million sq. ft., approximately 1.5 times the size of the Pentagon building in the USA.

- Data Centers are expected to consume nearly 2% of the world’s electricity by 2030, which is why various organizations have taken up Green Data Center initiatives.

The future of training is ‘virtual’

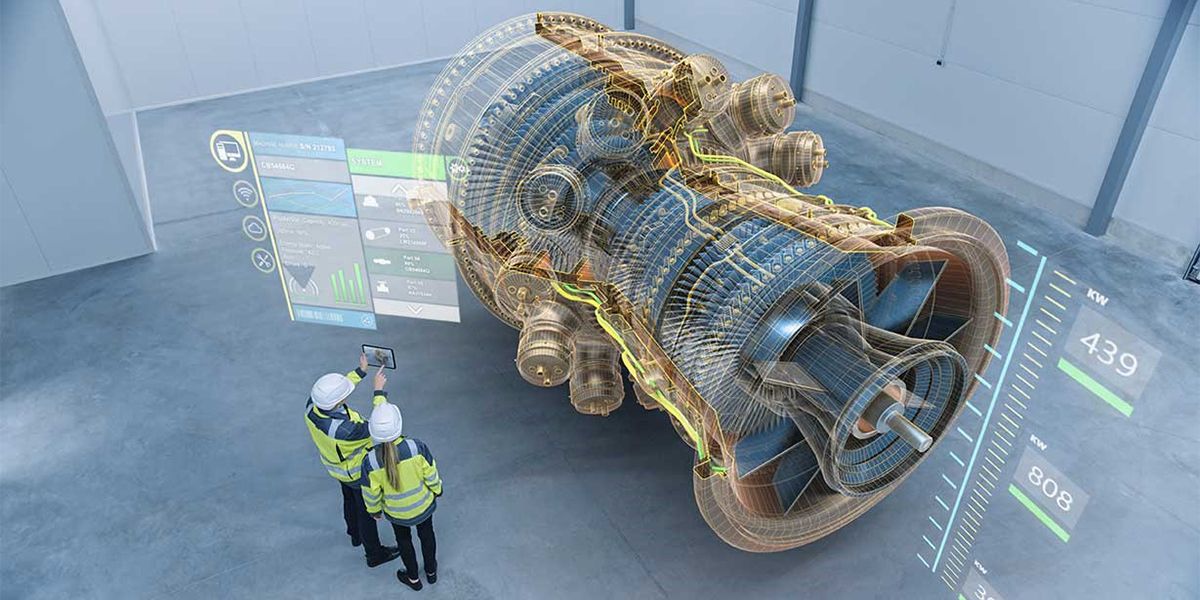

What sounds like the cutting edge of science fiction is no fantasy; it is happening right now as you read this article

Imagine getting trained in a piece of equipment that is part of a critical production pipeline. What if you can get trained while you are in your living room? Sounds fantastic, eh. Well, I am not talking about e-learning or video-based training. Rather what if the machine is virtually in your living room while you walk around it and get familiar with its features? What if you can interact with it and operate it while being immersed in a virtual replica of the entire production facility? Yes, what sounds like the cutting edge of science fiction is no fantasy; it is happening right now as you read this article.

Ever heard of the terms ‘Augmented Reality’ or ‘Virtual Reality’? Welcome to the world of ‘Extended Reality’. What may seem like science fiction is in reality a science fact. Here we will try to explain how these technologies help in transforming the learning experience for you.

Let’s get to the aforementioned example. A major pharmaceutical company wanted to train some of its employees on a machine. Simple, isn’t it? But here’s the catch: the machine was a one-of-a-kind custom build, located at a faraway facility, so the logistics were difficult. What if the operators could be trained remotely? That is when Sify proposed an Augmented Reality (AR) solution, with which operators can learn all about the machine, including how to operate it, wherever they are. All they needed was an iPad, which was a standard device in the company. The machine is simply overlaid on their real-world environment, and the user can walk around it as if it were present in the room. They could virtually operate the machine and even make mistakes that do not affect anything in the real world.

What is the point of learning if the company cannot measure the outcome? With this technology, several metrics can be tracked and analysed to provide feedback at the end of the training. So, what was the outcome at the pharma company? With the previous hands-on method, new operators took close to a year to come up to the speed of experienced operators. Even then, new operators took 12 minutes to perform a task that experienced operators did in 5 minutes, a staggering gap of 7 minutes. Using the augmented reality training protocols, all they needed was one afternoon, and new operators came up to the speed of experienced operators in no time. This means not only that more products can reach deserving patients but also that the company saves significant expenditure. And all users need is a smartphone or tablet that they already have. This is an amazingly effective training solution. Users can also be trained to dismantle and reassemble complex machines without risking their physical safety.

Not only corporations but schools too can use this technology for effective teaching. Imagine a student pointing her tablet at the textbook and, voila, the book comes alive with 3D models of an erupting volcano, or history becomes interesting through visual storytelling.

Now imagine another scenario. A company needs its employees to work more than 100 feet up, such as on an oil-rig tower or a high-tension electricity transmission tower. After months of training, when the employees go to the actual work site, some of them realize that they cannot work at that height.

They suffer from acrophobia or a fear of heights. They would not know of this unless they really climb to that height. What if the company could test in advance if the person can work in such a setting?

Enter Virtual Reality (VR). Using a virtual reality headset that the user can strap on to their head, they are immersed in a realistic environment. They look around and all they see is an abyss. They are instructed to perform some of the tasks that they will be doing at the work site. This is a safe way to gauge if the user suffers from acrophobia. Since VR is totally immersive, users will forget that they are safely standing on the floor and might get nervous or fail to do the tasks. This enables the company to identify people who fear heights earlier and assign them to a different task.

Any risky work environment can be virtually re-created for the training. This helps the employees get trained without any harm and it gives them confidence when they go to the actual work location.

VR requires a special headset and controllers. Many headsets with varying capabilities are already available to consumers, and some of them are fairly inexpensive.

A multitude of metrics can be tracked and stored on xAPI-based learning management systems (LMS). The admin or supervisor can use the analytics data to gauge how the employee has fared in the training, which helps them determine the learning outcome and the ROI (return on investment) of the training.
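To make "metrics stored on an xAPI-based system" concrete, here is a minimal sketch of one xAPI statement recording a completed training task and how long it took. Only the statement shape (actor, verb, object, result) and the ADL "completed" verb IRI follow the xAPI specification. The learner, the activity IRI and the rule that "success" means matching an experienced operator's time are all hypothetical. Sending the statement to a Learning Record Store is not shown.

```python
import json
from datetime import timedelta


def iso8601_duration(d: timedelta) -> str:
    """Format a timedelta as an ISO 8601 duration such as PT5M or PT1H2M3S."""
    total = int(d.total_seconds())
    hours, rem = divmod(total, 3600)
    minutes, seconds = divmod(rem, 60)
    out = "PT"
    if hours:
        out += f"{hours}H"
    if minutes:
        out += f"{minutes}M"
    if seconds or out == "PT":
        out += f"{seconds}S"
    return out


def completion_statement(email: str, name: str, activity_id: str,
                         activity_name: str, task_time: timedelta,
                         target_time: timedelta) -> dict:
    """Build an xAPI 'completed' statement that records how long a task took."""
    return {
        "actor": {"objectType": "Agent", "name": name, "mbox": f"mailto:{email}"},
        "verb": {
            "id": "http://adlnet.gov/expapi/verbs/completed",
            "display": {"en-US": "completed"},
        },
        "object": {
            "objectType": "Activity",
            "id": activity_id,
            "definition": {"name": {"en-US": activity_name}},
        },
        "result": {
            "completion": True,
            # Hypothetical rule: success means matching an experienced operator's time.
            "success": task_time <= target_time,
            "duration": iso8601_duration(task_time),
        },
    }


if __name__ == "__main__":
    stmt = completion_statement(
        "new.operator@example.com", "New Operator",
        "https://example.com/activities/ar-machine-setup",  # hypothetical activity IRI
        "AR machine set-up task",
        task_time=timedelta(minutes=12), target_time=timedelta(minutes=5),
    )
    print(json.dumps(stmt, indent=2))
```

An analytics dashboard could then aggregate the `duration` and `success` fields across learners to show how quickly new operators close the gap.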

Training is changing fast and becoming more effective with these new-age technologies. A lot of collaborative learning can happen in virtual reality when multiple users log on to the same training session at the same time to learn a task. These immersive methods help learners retain more of what they learn than other methods of training do.

Well, the future is already here!

How OTT platforms provide seamless content – A Data Center Walkthrough

With the number of options and choices available, it almost seems like there is no end to what you can watch on these platforms. Storing such a huge library of shows and movies in HD quality should not be difficult for a company like Netflix. But how do they deliver this content to so many people, at the same time, at such a large scale?

The India CTV Report 2021 says around 70% of users in the country spend up to four hours watching OTT content. As India fast becomes one of the largest consumers of OTT content, players like Netflix, Prime Video and Zee5 are competing to provide relevant, user-centric content, using Machine Learning algorithms to suggest what you may like to watch.

Here, we take a look at the architecture behind the smooth experience of watching your favourite movie on your phone, tablet, laptop, etc.

Until not too long ago, buffering YouTube videos was a common household problem. Now, bingeing on Netflix shows has become a common household habit. Data-heavy, media-rich content can now be streamed at high speed and high quality around the world, without buffering, let alone downtime due to server crashes (ask an IRCTC ticket booker what that is like). Let’s see how this has become possible:

Traditionally, to access a website, data from the origin server (which may be in another country) has to travel across the internet along an incredibly long path to reach your device, where you can see the website and its content. The distance is extremely long, and the origin server has to cater to a very large number of requests for its content. For both reasons, it would be near impossible to provide a streaming service to consumers around the world from a single server farm. Server farms are also not easy to maintain, given the enormous power and cooling needed to process and store vast amounts of data.
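The cost of distance can be put in numbers with a back-of-the-envelope sketch. Light in optical fibre covers roughly 200 km per millisecond, so propagation alone sets a floor on every round trip. The two distances are hypothetical, and so is the assumption of three round trips (connection set-up, encryption set-up, then the request) before the first byte arrives. Real routes are longer than straight lines, and queuing and server processing add more delay on top.

```python
# Light in optical fibre travels at roughly 200,000 km/s, i.e. about 200 km per millisecond.
FIBRE_KM_PER_MS = 200.0


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on one request/response round trip, from propagation delay alone."""
    return 2 * distance_km / FIBRE_KM_PER_MS


def min_time_to_first_byte_ms(distance_km: float, round_trips: int = 3) -> float:
    """Assumes a few round trips (connection and encryption set-up, then the request)."""
    return round_trips * min_round_trip_ms(distance_km)


if __name__ == "__main__":
    for label, km in [("distant origin server", 13_000), ("nearby edge location", 50)]:
        print(f"{label:>22}: at least {min_time_to_first_byte_ms(km):.1f} ms before the first byte")
```

Under these assumptions, a server 13,000 km away cannot answer in less than about 390 ms, while one 50 km away can start answering in under 2 ms. That difference is why content is moved closer to viewers.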

This is where Data Centers around the world have helped OTT players like Netflix provide seamless content to users everywhere. Data Centers are secure spaces with controlled environments that host the servers storing and delivering content to users in and around a region. Rather than going to other countries to build and run their own facilities, media players rent space in these Data Centers and leave the complexities to the colocation service provider.

How Edge Data Centers act as a catalyst

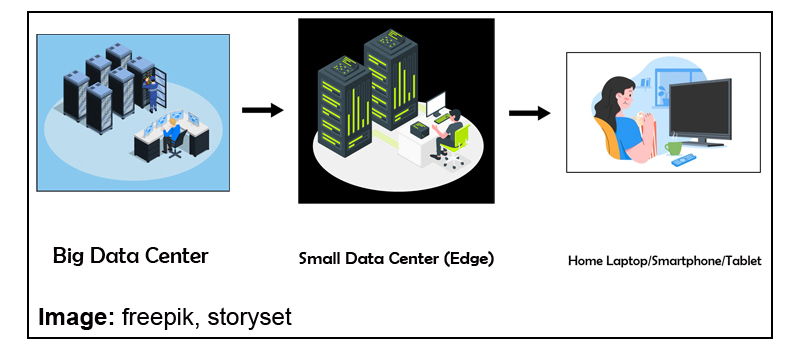

Hosting multiple servers in Data Centers can sometimes be highly expensive and resource-consuming because of the multiple server set-ups needed across locations. Moreover, delivering HD-quality film content requires a lot of processing and storage. One solution to this problem is the Edge Data Center. It is essentially a smaller data center, and could even be just a regional point of presence (PoP) in a network hub maintained by a network or internet service provider.

As long as there is a PoP with modest storage and compute capacity, interconnected with the data center, the edge data center can cache (copy) content at a location closer to the end consumer than a regular Data Center. This results in lower latency (the time taken to deliver data) and makes the streaming experience fast and effortless.
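The caching idea can be illustrated with a toy edge cache. A request is served from the local copy if one exists. Otherwise it is fetched once from the distant origin and kept for the next viewer. The least-recently-used (LRU) eviction policy, the capacity and the segment names are assumptions for illustration. Real CDN caches use a variety of policies and sizes.

```python
from collections import OrderedDict
from typing import Callable


class EdgeCache:
    """Toy edge PoP cache: serve local copies, fetch from origin on a miss, evict LRU."""

    def __init__(self, capacity: int, fetch_from_origin: Callable[[str], bytes]) -> None:
        self.capacity = capacity
        self.fetch_from_origin = fetch_from_origin
        self.store: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self.store:
            self.store.move_to_end(key)      # mark as most recently used
            self.hits += 1
            return self.store[key]
        self.misses += 1
        data = self.fetch_from_origin(key)   # slow, long-distance trip to the origin
        self.store[key] = data
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)   # evict the least recently used item
        return data


if __name__ == "__main__":
    cache = EdgeCache(capacity=100, fetch_from_origin=lambda k: f"<video bytes for {k}>".encode())
    for viewer in range(1000):               # many viewers watching the same episode start
        cache.get("episode-01/segment-0001.ts")  # hypothetical segment name
    print(f"hits={cache.hits} misses={cache.misses}")
```

When many viewers in a region watch the same popular title, almost every request becomes a local hit, and the origin server is contacted only once per segment.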

Role of Content Delivery Networks (CDN)

The edge data center therefore acts as a catalyst for content delivery networks, supporting streaming without buffering. Content Delivery Networks (CDNs) are specialized networks that support the high bandwidth needed for high-speed data transfer and processing. Edge Data Centers are an important element of CDNs, ensuring you can binge on your favorite OTT series at high speed and high quality.
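A CDN also has to decide which edge location serves each viewer. The sketch below makes that decision with a deliberately simplified rule: pick the PoP with the shortest great-circle (haversine) distance to the viewer. Production CDNs typically steer traffic with DNS or anycast and take measured latency and load into account, so treat this only as an illustration. The PoP names and coordinates are hypothetical approximations.

```python
import math
from typing import Dict, Tuple

LatLon = Tuple[float, float]

# Hypothetical edge PoPs with approximate coordinates (latitude, longitude).
EDGE_POPS: Dict[str, LatLon] = {
    "mumbai": (19.08, 72.88),
    "chennai": (13.08, 80.27),
    "delhi": (28.61, 77.21),
}


def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two (latitude, longitude) points, in km."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))  # 6371 km = mean Earth radius


def nearest_pop(user: LatLon, pops: Dict[str, LatLon]) -> str:
    """Name of the PoP closest to the user (distance only; ignores load and latency)."""
    return min(pops, key=lambda name: haversine_km(user, pops[name]))


if __name__ == "__main__":
    print(nearest_pop((18.52, 73.86), EDGE_POPS))  # a viewer near Pune
```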

Although many OTT players like Sony and Zee opt for a captive Data Center approach for security reasons, a better alternative would be to colocate (outsource) servers with a service provider, and even to opt for a cloud service that is agile and scalable enough to meet sudden storage and compute requirements. Another reason to colocate with service providers is the interconnected Data Center network they bring with them. This makes it easier to reach other Edge locations and Data Centers and to leverage an existing network without incurring the cost of building a dedicated one.

Demand for OTT services has seen a steady rise and the pandemic, in a way, acted as a catalyst in this drive.

However, OTT platform business models must be mindful of the pitfalls.

Understanding the target audience has to be top of the list to build a loyal user base. New content and a better UX (User Experience) could keep subscribers interested, since many of them opt out after the free trial.

The infrastructure and integral elements of Edge Data Centers are likely to take center stage in making content flow more seamlessly in the future. This, in turn, would open up the job market for more technical staff, engineers and other professionals.

SAP Security – A Holistic View

More than 90% of Fortune 500 organizations run SAP to manage their mission-critical business processes. Given the much greater risk of cybersecurity breaches in today’s volatile, tech-savvy geo-socio-political world, the security of your SAP systems deserves more serious consideration than ever before.

Incidents such as hacked websites, successful Denial-of-Service attacks and stolen user data (passwords, bank account numbers and other sensitive data) are on the rise.

Taking a holistic view, this article outlines possible ways to remediate and plug the gaps at every layer: the operating system, the network, the application, the cloud and everything in between. The related SAP products/solutions and best practices are also addressed in the context of security.

1. Protect your IT environment

Internet Transaction Server (ITS) Security

To make SAP system applications safely accessible from a web browser, a middleware component called the Internet Transaction Server (ITS) is used. The ITS architecture has many built-in security features.

Network Basics (SAP Router, Firewalls and Network Ports)

The basic security tools SAP uses are firewalls, network ports and SAP Router. SAP Web Dispatcher and SAP Router are examples of application-level gateways that can be used to filter SAP network traffic.
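Application-level filtering of this kind comes down to a rule table. SAP Router reads its rules from a route permission table (saprouttab). The sketch below is loosely modelled on that idea. It evaluates rules of the form action/source/destination/service, "P" permits and "D" denies, the first matching rule decides, and anything unmatched is refused. This is a simplified model for illustration, not SAP's parser. Real saprouttab files support further entry types and optional fields, and the table below is hypothetical.

```python
from fnmatch import fnmatchcase
from typing import List, NamedTuple


class Rule(NamedTuple):
    action: str    # "P" permit, "D" deny (letters borrowed from saprouttab)
    source: str    # source host/IP pattern, '*' wildcards allowed
    dest: str      # destination host/IP pattern
    service: str   # destination service/port pattern


def parse_rules(text: str) -> List[Rule]:
    rules = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        action, source, dest, service = line.split()[:4]
        rules.append(Rule(action.upper(), source, dest, service))
    return rules


def is_allowed(rules: List[Rule], source: str, dest: str, service: str) -> bool:
    """First matching rule decides; if nothing matches, the connection is refused."""
    for r in rules:
        if (fnmatchcase(source, r.source) and fnmatchcase(dest, r.dest)
                and fnmatchcase(service, r.service)):
            return r.action == "P"
    return False


# Hypothetical table: block one subnet entirely, then open specific routes only.
TABLE = """
# action  source       destination  service
D         10.0.9.*     *            *
P         10.0.1.*     10.20.0.5    3200
P         *            10.20.0.7    3300
"""
```

Because the first match wins, the order of the entries matters: a broad deny must come before the permits it is meant to override.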

Web-AS (Application Server) Security

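SSL (Secure Sockets Layer), now superseded by its successor TLS (Transport Layer Security), is a standard security technology for establishing an encrypted link between a server and a client. During the handshake, the communication partners are authenticated with certificates (always the server, optionally the client), and the two sides agree on the parameters of the encryption.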
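What "authenticating the partner and agreeing on encryption" means for a client can be shown with Python's standard `ssl` module. The context below requires a valid server certificate and a matching host name, and it refuses anything older than TLS 1.2. The host name in the usage note is hypothetical. Configuring certificates on the SAP Web AS side happens in the SAP system itself and is not shown.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Client-side TLS settings: verify the server and refuse legacy protocol versions."""
    ctx = ssl.create_default_context()            # loads trusted CAs, checks cert + hostname
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # reject SSLv3 / TLS 1.0 / TLS 1.1
    return ctx


def describe_connection(host: str, port: int = 443) -> dict:
    """Open a verified TLS connection and report what was negotiated."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return {
                "protocol": tls.version(),          # e.g. the negotiated TLS version
                "cipher": tls.cipher()[0],          # negotiated cipher suite name
                "subject": tls.getpeercert().get("subject"),
            }
```

Calling `describe_connection("sap-portal.example.com")` (a hypothetical host) raises an `ssl.SSLCertVerificationError` if the server's certificate cannot be verified. Otherwise it returns the protocol version and cipher the two sides agreed on.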

2. Operating System Security hardening for HANA

SAP pays close attention to security. The security of the underlying Operating System is at least as important as the security of the HANA database itself, because many attacks target the Operating System rather than the database directly. Once an attacker has gained access and sufficient privileges, they can go on to attack the running database application.

Customized operating system security hardening for HANA includes:

- Security hardening settings for HANA

- SUSE/RHEL firewall for HANA

- Minimal OS package selection (the fewer OS packages a HANA system has installed, the fewer potential security holes it has)

The following procedure is used for any server hardening:

- Benchmark templates used for hardening

- Hardening parameters considered

- Steps followed for hardening

- Post-hardening test by DB/application team

The above procedures should help SAP customers secure their servers (mostly on HP-UX, SUSE Linux, RHEL or Wintel) against threats, known and unknown attacks, and vulnerabilities. They also add one more layer of security at the host level.
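The "hardening parameters" and "post-hardening test" steps can be partly automated with a baseline check. The sketch below compares Linux kernel parameters against expected values by reading `/proc/sys`. The four parameters are generic examples of the kind found in hardening benchmarks, not SAP's HANA-specific list. The real values should come from your benchmark template.

```python
from pathlib import Path
from typing import Callable, Dict, List

# Hypothetical baseline of generic Linux hardening settings (not an SAP-provided list).
BASELINE: Dict[str, str] = {
    "net.ipv4.ip_forward": "0",
    "net.ipv4.conf.all.accept_redirects": "0",
    "net.ipv4.conf.all.send_redirects": "0",
    "kernel.randomize_va_space": "2",
}


def read_sysctl(name: str) -> str:
    """Read a kernel parameter from /proc/sys (Linux only)."""
    return Path("/proc/sys", *name.split(".")).read_text().strip()


def check_baseline(baseline: Dict[str, str],
                   reader: Callable[[str], str] = read_sysctl) -> List[str]:
    """Return one finding per parameter that is unreadable or differs from the baseline."""
    findings = []
    for name, expected in baseline.items():
        try:
            actual = reader(name)
        except OSError:
            findings.append(f"{name}: not readable")
            continue
        if actual != expected:
            findings.append(f"{name}: expected {expected}, found {actual}")
    return findings


if __name__ == "__main__":
    for finding in check_baseline(BASELINE) or ["baseline OK"]:
        print(finding)
```

You could run the same check before hardening, to document the gaps, and again afterwards, alongside the DB/application team's tests.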

3. SAP Application (Transaction-level security)

SAP Security has always been a fine balancing act. SAP data and applications must be protected from unauthorized use and access, while users must still be able to perform the transactions they are supposed to. Designing the SAP authorization matrix therefore requires careful thought and must take into account the principle of segregation of duties (SoD).
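A segregation-of-duties check can be sketched as follows. Resolve each user's roles to the transaction codes they grant, then flag users who hold both sides of a conflicting pair. The two rules, the role names and the users are hypothetical illustrations. A real SoD rule set (for example, the one maintained in SAP GRC Access Control) is far larger and also looks at authorization objects, not just transaction codes.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical rule set: pairs of transaction codes one person should not hold together.
SOD_RULES: List[Tuple[str, str, str]] = [
    ("XK01", "F110", "Maintain vendor master + run automatic payments"),
    ("ME21N", "MIGO", "Create purchase order + post goods receipt"),
]


def sod_conflicts(user_roles: Dict[str, Set[str]],
                  role_tcodes: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Return, per user, the SoD rules violated by the union of their roles."""
    conflicts: Dict[str, List[str]] = {}
    for user, roles in user_roles.items():
        tcodes: Set[str] = set()
        for role in roles:
            tcodes |= role_tcodes.get(role, set())
        hits = [desc for a, b, desc in SOD_RULES if a in tcodes and b in tcodes]
        if hits:
            conflicts[user] = hits
    return conflicts


if __name__ == "__main__":
    role_tcodes = {  # hypothetical custom roles
        "Z_AP_CLERK": {"FB60", "F110"},
        "Z_VENDOR_MAINT": {"XK01", "XK02"},
        "Z_BUYER": {"ME21N"},
    }
    user_roles = {"ANITA": {"Z_AP_CLERK", "Z_VENDOR_MAINT"}, "RAVI": {"Z_BUYER"}}
    print(sod_conflicts(user_roles, role_tcodes))
```

Neither role is risky on its own. The conflict only appears when both roles are assigned to the same user, which is why the check runs over the union of a user's roles.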

The Business Transaction Analysis (transaction code STAD) delivers workload statistics for business transactions and jobs. A business transaction is a user’s transaction that starts when a transaction is called (/n…) and ends with an update call or when the user leaves the transaction. STAD data can be used to monitor, analyse and audit transaction usage, and so help maintain security against unauthorized transaction access.
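One way to use such statistics for an audit is to compare what users actually ran against what the business expects them to run. The sketch assumes the STAD records have been exported to CSV with columns named `user` and `tcode`. Both the export format and the "approved usage" list are hypothetical inputs. The check counts executions of transactions that are not on a user's approved list, which may point to access that is technically granted but should not be.

```python
import csv
import io
from collections import Counter
from typing import Dict, Set


def unexpected_usage(csv_text: str, approved: Dict[str, Set[str]]) -> Counter:
    """Count (user, tcode) executions that are not on the user's approved list."""
    counts: Counter = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        user, tcode = row["user"].strip(), row["tcode"].strip()
        if tcode not in approved.get(user, set()):
            counts[(user, tcode)] += 1
    return counts


if __name__ == "__main__":
    export = "user,tcode\nANITA,FB60\nANITA,SE16\nRAVI,ME21N\nANITA,SE16\n"  # hypothetical export
    approved = {"ANITA": {"FB60"}, "RAVI": {"ME21N"}}
    for (user, tcode), n in unexpected_usage(export, approved).most_common():
        print(f"{user} ran {tcode} {n} time(s) without approval")
```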

4. SAP GRC

SAP GRC (Governance, Risk and Compliance), a key SAP offering, has the following sub-modules:

Access Control

SAP GRC Access Control application enables reduction of access risk across the enterprise by helping prevent unauthorized access across SAP applications and achieving real-time visibility into access risk.

Process Control

SAP GRC Process Control is an application used to meet production business process and information technology (IT) control monitoring requirements, as well as to serve as an integrated, end-to-end internal control compliance management solution.

Risk Management

- Enterprise-wide risk management framework

- Key risk indicators and automated risk alerts from business applications
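Key risk indicators with automated alerts boil down to comparing readings against warning and alert thresholds. The sketch below shows that logic. The indicator names and threshold values are hypothetical, and in this sketch a higher value always means higher risk. In SAP GRC Risk Management, the indicators and their sources are configured in the product rather than coded like this.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class KRI:
    name: str
    warn: float   # reading at or above this raises a warning
    alert: float  # reading at or above this raises an alert (higher = riskier here)


def evaluate(kris: List[KRI], readings: Dict[str, float]) -> Dict[str, str]:
    """Classify each KRI reading as OK, WARN, ALERT or NO DATA."""
    status: Dict[str, str] = {}
    for k in kris:
        value = readings.get(k.name)
        if value is None:
            status[k.name] = "NO DATA"   # a silent feed is itself worth flagging
        elif value >= k.alert:
            status[k.name] = "ALERT"
        elif value >= k.warn:
            status[k.name] = "WARN"
        else:
            status[k.name] = "OK"
    return status


if __name__ == "__main__":
    kris = [  # hypothetical indicators and thresholds
        KRI("failed_logons_per_day", warn=50, alert=200),
        KRI("users_with_sod_conflicts", warn=1, alert=5),
        KRI("emergency_access_sessions", warn=3, alert=10),
    ]
    print(evaluate(kris, {"failed_logons_per_day": 250, "users_with_sod_conflicts": 2}))
```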

5. SAP Audit

AIS (Audit Information System)

AIS, the Audit Information System, is a built-in auditing tool in SAP that you can use to analyse the security aspects of your SAP system in detail. AIS is designed for both business audits and system audits, and it presents its information in the Audit Info Structure.

In addition, there may be a license audit by SAP and/or by your company’s auditing firm (such as Deloitte or PwC).

Basic Audit

Here, the SAP auditors work closely with a designated license compliance manager, who is responsible for ensuring that the audit activities follow SAP’s procedures and directives. The number of basic audits undertaken is subject to SAP’s yearly planning, and not all customers are audited every year.

The auditors perform the following tasks (though these vary somewhat between organizations and auditors):

- Analysis of the system landscape to make sure that all relevant systems (production and development) are measured.

- Technical verification of the USMM (system measurement) log files: correctness of the client, price list selection, user types, dialog users vs. technical users, background jobs, installed components, etc.

- Technical verification of the LAW (License Administration Workbench): user combinations and their counts, etc.

- Analysis of engine measurement and verification of the relevant SAP Notes

- Additional verification of expired users, multiple logons, late logons, workbench development activities, etc.

- Verification of self-declaration products, HANA measurement and SAP BusinessObjects.
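Some of these checks can be rehearsed internally before the auditors arrive. The sketch below works on a hypothetical export of user master data. It flags users whose validity has expired, dialog users (SAP user type "A") with no logon in the last 90 days, and user IDs that appear in several systems and therefore deserve a look at how they are consolidated. The 90-day threshold and the record layout are assumptions. This is a pre-audit self-check, not SAP's measurement method (USMM/LAW).

```python
from datetime import date, timedelta
from typing import Dict, List, NamedTuple, Optional, Set


class UserRecord(NamedTuple):
    system: str                 # e.g. "PRD", "DEV"
    user: str
    user_type: str              # SAP user type letter: "A" dialog, "B" system, ...
    valid_to: Optional[date]    # None = no end of validity
    last_logon: Optional[date]  # None = never logged on


def pre_audit_findings(records: List[UserRecord], today: date,
                       inactive_days: int = 90) -> List[str]:
    """Hypothetical self-check on exported user data before a license audit."""
    findings: List[str] = []
    systems: Dict[str, Set[str]] = {}
    for r in records:
        systems.setdefault(r.user, set()).add(r.system)
        if r.valid_to is not None and r.valid_to < today:
            findings.append(f"{r.system}/{r.user}: validity expired {r.valid_to}")
        if r.user_type == "A" and (r.last_logon is None
                                   or today - r.last_logon > timedelta(days=inactive_days)):
            findings.append(f"{r.system}/{r.user}: dialog user inactive")
    for user, names in sorted(systems.items()):
        if len(names) > 1:
            findings.append(f"{user}: present in {sorted(names)}, check consolidation")
    return findings
```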

SAP Enhanced Audit

An enhanced audit is performed remotely and/or onsite for selected customers. In addition to the tasks of the Basic Audit, it covers:

- Checking interactions between SAP and non-SAP systems

- Data flow direction

- Details of how data is transferred between systems/users (EDI, IDoc, etc.)

6. Security in SAP S/4HANA and SAP BW/4HANA

SAP S/4HANA and SAP BW/4HANA use the same security model as traditional ABAP applications, so all the components and security solutions described above apply fully to both.

However, their SAP Fiori component, which brings in mobility, poses additional security challenges. Greater mobility means that data may be transferred over a 4G mobile network, which can be less secure and easier to intercept. And if a device falls into the wrong hands through theft or loss, whoever holds it could gain unauthorized access to your system. The remediation is described next.

7. Security in Fiori

When an SAP Fiori app is launched, the SAP Fiori launchpad sends the request from the client to the ABAP front-end server via SAP Web Dispatcher, and the ABAP front-end server authenticates the user. To do so, it uses the authentication and single sign-on (SSO) mechanisms provided by SAP NetWeaver.

Securing the SAP Fiori system ensures that the information and processes supporting your business needs are protected against unauthorized access to critical information.

The biggest threat to a mobile SAP app is the risk of an employee losing important customer data. The good news is that most mobile devices support remote wipe. Also, many of the CRM-related functions that organizations want to use on mobile phones are cloud-based, which means the confidential data does not reside on the device itself.

SAP Afaria, one of the most popular mobile security solutions for SAP, is used by many large organizations to enhance security in Fiori. It helps connect and manage mobile devices such as smartphones and tablet computers. Afaria can automate electronic file distribution, file and directory management, notifications and system registry management tasks. Critical security tasks include regularly backing up data, installing patches and security updates, enforcing security policies, and monitoring security violations or threats.
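The "enforcing security policies" part of mobile device management can be pictured as a compliance decision made before a device may reach Fiori apps. The sketch below is generic policy logic, not Afaria's API. The device attributes, the minimum OS version and the action strings are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

MIN_OS_VERSION: Tuple[int, ...] = (16, 0)   # hypothetical policy value


@dataclass
class Device:
    device_id: str
    os_version: Tuple[int, ...]
    encrypted: bool
    passcode_set: bool
    remote_wipe_enabled: bool
    reported_lost: bool = False


def policy_actions(d: Device) -> List[str]:
    """Decide what to do before the device may reach SAP Fiori apps."""
    if d.reported_lost:
        return ["remote-wipe"]          # lost or stolen: wipe the device first
    actions = []
    if d.os_version < MIN_OS_VERSION:   # tuples compare element by element
        actions.append("block: update OS (security patches)")
    if not (d.encrypted and d.passcode_set):
        actions.append("block: enable encryption and passcode")
    if not d.remote_wipe_enabled:
        actions.append("block: enrol for remote wipe")
    return actions or ["allow"]
```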

8. SAP Analytics Cloud (SAC)

SAP Analytics Cloud (or SAP Cloud for Analytics) is a software as a service (SaaS) business intelligence (BI) platform designed by SAP. Analytics Cloud is designed specifically to provide all analytics capabilities to all users in one product.

Built natively on SAP HANA Cloud Platform (HCP), SAP Analytics Cloud allows data analysts and business decision makers to visualize, plan and make predictions all from one secure, cloud-based environment. With all the data sources and analytics functions in one product, Analytics Cloud users can work more efficiently. It is seamlessly integrated with Microsoft Office.

SAP Analytics Cloud uses a role-based security model similar to that of traditional ABAP applications.

Roles, users, teams, permissions and activity auditing are all available to manage security.
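Roles and teams typically combine as follows: a user's effective permissions are the union of the permissions of the roles assigned directly and of the roles assigned to every team the user belongs to. The sketch below illustrates that union model. It is an assumption for illustration, not SAP Analytics Cloud's implementation, and the role, team and permission names are hypothetical. In SAC itself, roles and teams are maintained in its administration screens.

```python
from typing import Dict, Set


def effective_permissions(user: str,
                          user_roles: Dict[str, Set[str]],
                          team_members: Dict[str, Set[str]],
                          team_roles: Dict[str, Set[str]],
                          role_permissions: Dict[str, Set[str]]) -> Set[str]:
    """Union of permissions from directly assigned roles and from team roles."""
    roles = set(user_roles.get(user, set()))
    for team, members in team_members.items():
        if user in members:
            roles |= team_roles.get(team, set())
    perms: Set[str] = set()
    for role in roles:
        perms |= role_permissions.get(role, set())
    return perms
```

Assigning roles to teams rather than to individuals keeps the model manageable: moving a person between teams changes their access in one step.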

9. Identity Management

SAP Identity Management is part of a comprehensive SAP security suite. It covers the entire identity lifecycle and provides automation capabilities based on business processes.

It takes a holistic approach to managing identities and permissions, ensuring that the right users have the right access to the right systems at the right time. This enables the efficient, secure and compliant execution of business processes.
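"Right access at the right time" over an identity's lifecycle can be expressed as one rule for joiners, movers and leavers alike. Work out the access the person's current position requires, then compare it with what they hold now to get what to grant and what to revoke. The role model and access names below are hypothetical. SAP Identity Management drives this through its own configured workflows, not through code like this.

```python
from typing import Dict, Optional, Set

# Hypothetical role model: which technical access each business position needs.
ROLE_MODEL: Dict[str, Set[str]] = {
    "ap_clerk": {"S4:Z_AP_POSTING", "FIORI:Z_AP_APPS"},
    "buyer": {"S4:Z_PURCHASING", "FIORI:Z_PURCHASING_APPS", "ARIBA:BUYER"},
}


def target_access(position: Optional[str]) -> Set[str]:
    """Access a person should have; None means the person has left (leaver)."""
    if position is None:
        return set()
    return set(ROLE_MODEL.get(position, set()))


def provisioning_plan(current: Set[str], position: Optional[str]) -> Dict[str, Set[str]]:
    """Joiner, mover and leaver handled alike: diff current access against the target."""
    target = target_access(position)
    return {"grant": target - current, "revoke": current - target}
```

Revoking access on a move matters as much as granting it. Otherwise access accumulates over time and creates exactly the segregation-of-duties conflicts discussed earlier.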

10. IAG (Identity Access Governance) for Cloud Security

SAP Identity Access Governance (IAG) is a multi-tenant solution built on top of SAP Business Technology Platform (BTP) and SAP’s proprietary HANA database. It is SAP’s latest innovation for Access Governance for Cloud.

It provides out-of-the-box integration with SAP’s latest cloud applications, such as SAP Ariba, SAP SuccessFactors, SAP S/4HANA Cloud and SAP Analytics Cloud, and with other cloud solutions. Many more SAP and non-SAP integrations are on the roadmap.

11. SAP Data Custodian

To allay fears about data security in SAP systems hosted on the public cloud, SAP introduced its latest solution, ‘SAP Data Custodian’. It is an innovative governance, risk and compliance SaaS solution. It gives your organization visibility and control over your data in the public cloud similar to what was previously available only on-premise or in a private cloud.

- Lets you localize your data and restrict access to your cloud resources and SAP applications based on user context, including geo-location and citizenship

- Restricts access to your data, including access by employees of the cloud infrastructure provider

- Puts encryption key management control in your hands and provides an additional layer of data protection by segregating your keys from your business data

- Uses tokenization to secure sensitive database fields by replacing sensitive data with format-preserving randomly generated strings of characters or symbols, known as tokens

- With data discovery you can scan for sensitive data categories such as SSN / SIN, national ID number, passport number, IBAN, credit card, email address, ethnicity, et cetera, based on pattern determination and machine learning
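Two of these ideas, pattern-based discovery and format-preserving tokenization, can be sketched together. The code finds candidate card numbers with a pattern plus the Luhn checksum, and e-mail addresses with a simple pattern. It then replaces each match with a random token of the same shape (digits stay digits, letters stay letters, separators are kept) and stores the mapping in a vault. This illustrates the general technique only, not SAP Data Custodian's implementation, which also uses machine learning and proper key and vault management. The regular expressions are deliberately simple assumptions.

```python
import re
import secrets
import string
from typing import Dict

# Candidate card numbers: 13-19 digits, optionally separated by spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def luhn_ok(number: str) -> bool:
    """Luhn checksum, used to weed out digit runs that cannot be card numbers."""
    digits = [int(c) for c in number if c.isdecimal()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


class TokenVault:
    """Format-preserving tokens: same length, digits stay digits, letters stay letters."""

    def __init__(self) -> None:
        self._vault: Dict[str, str] = {}

    @staticmethod
    def _random_like(c: str) -> str:
        if c.isdecimal():
            return secrets.choice(string.digits)
        if c.isascii() and c.isalpha():
            return secrets.choice(string.ascii_uppercase if c.isupper() else string.ascii_lowercase)
        return c  # keep separators such as '-', ' ', '@' and '.'

    def tokenize(self, value: str) -> str:
        if not any(c.isdecimal() or (c.isascii() and c.isalpha()) for c in value):
            return value  # nothing to replace
        while True:
            token = "".join(self._random_like(c) for c in value)
            if token != value and token not in self._vault:
                self._vault[token] = value
                return token

    def detokenize(self, token: str) -> str:
        return self._vault[token]


def protect(text: str, vault: TokenVault) -> str:
    """Discover card numbers (pattern + Luhn) and e-mail addresses, and tokenize them."""
    text = CARD_RE.sub(lambda m: vault.tokenize(m.group()) if luhn_ok(m.group()) else m.group(), text)
    return EMAIL_RE.sub(lambda m: vault.tokenize(m.group()), text)
```

Because tokens keep the original format, downstream systems that validate field length or character classes continue to work. Only a holder of the vault can map a token back to the real value.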

12. Futuristic approach towards securing ERP systems

Driven by the digital transformation of businesses and its demand for flexible computing resources, the cloud has become the prevalent deployment model for enterprise services and applications. This has introduced complex stakeholder relations and extended attack surfaces.

Mobility (access from smartphones/tablets) and IoT (Internet of Things) brought new challenges of scale (“billions of devices”). They also brought the need to cope with the devices’ limited computational and storage capabilities, which calls for specially designed lightweight security protocols. Sensor integration offered new opportunities for application scenarios, for instance in distributed supply chains.
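As an illustration of why constrained devices favour lightweight, symmetric-key mechanisms, the sketch below authenticates sensor readings with HMAC-SHA256 from Python's standard library and rejects replays using a per-device message counter. It is not an SAP protocol. The message format, the 16-byte truncated tag and the pre-shared key per device are assumptions, and key provisioning is out of scope.

```python
import hashlib
import hmac
import json
from typing import Dict

TAG_BYTES = 16  # truncated HMAC-SHA256 tag: a bandwidth/strength trade-off (assumption)


def _tag(key: bytes, body: str) -> bytes:
    return hmac.new(key, body.encode(), hashlib.sha256).digest()[:TAG_BYTES]


def seal(key: bytes, device_id: str, counter: int, payload: dict) -> dict:
    """Device side: serialise the reading with an increasing counter and tag it."""
    body = json.dumps({"dev": device_id, "ctr": counter, "data": payload},
                      sort_keys=True, separators=(",", ":"))
    return {"body": body, "tag": _tag(key, body).hex()}


class Verifier:
    """Gateway/backend side: check the tag and reject replays using the counter."""

    def __init__(self, keys: Dict[str, bytes]) -> None:
        self.keys = keys                      # one pre-shared key per device
        self.last_counter: Dict[str, int] = {}

    def accept(self, msg: dict) -> bool:
        try:
            fields = json.loads(msg["body"])
            device = fields["dev"]
            key = self.keys[device]
            counter = int(fields["ctr"])
            tag = bytes.fromhex(msg["tag"])
        except (KeyError, TypeError, ValueError):
            return False                      # malformed message or unknown device
        if not hmac.compare_digest(_tag(key, msg["body"]), tag):
            return False                      # tampered body or wrong key
        if counter <= self.last_counter.get(device, -1):
            return False                      # replayed or stale message
        self.last_counter[device] = counter
        return True
```

A symmetric MAC like this costs a few hash computations per message. That is far cheaper for a small sensor than a public-key signature, which is the kind of trade-off lightweight protocols are built around.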

The increased capabilities of sensors and gateways now allow business logic to move to the edge, removing the backend performance bottleneck.

SAP has invested heavily in drawing up and refining its security roadmap for the future.

First, it used McKinsey’s 7S framework to review SAP Security Research and adapt supporting factors. Second, it assessed technology trends reported by Gartner, Forrester, IDC and others to identify probable security challenges.

According to SAP’s research, today’s big cybersecurity challenges center on ML (Machine Learning). The trend is ML everywhere! ML itself provides a new attack vector that needs to be secured. In addition, ML is used by attackers, and so needs to be used by us to better defend our solutions.

Machine Learning has the most significant impact on the security and privacy roadmap these days. It provides the power of data to design novel security mechanisms, and it also requires new security and privacy paradigms to counter ML-specific threats.

Deceptive applications are another trend SAP foresees: applications must be enabled to identify attackers and defend themselves.

Third, SAP foresees attacks via open-source or third-party software, a threat that is still underestimated. SAP has been adapting its strategy accordingly to tackle these new trends.

Wishing all SAP Customers a Happy, Safe and Compliant SAP experience!

SAP Migrations to AWS Cloud using CloudEndure Migration Tool

Enterprises migrating SAP workloads to AWS are looking for a readily available as-is migration solution. Earlier, enterprises performed this type of migration with the traditional SAP backup-and-restore method or with AWS-native tools such as AWS Server Migration Service. CloudEndure Migration is a new AWS-native migration tool for SAP customers.

Enterprises looking to host a large number of SAP systems onto AWS can use CloudEndure Migration without worrying about compatibility, performance disruption or long cutover windows. You can perform any re-architecture after your systems start running on AWS.

Solution Overview

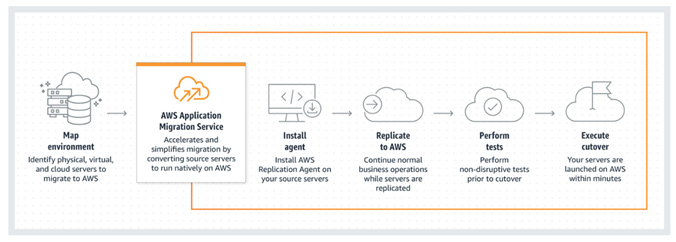

CloudEndure Migration simplifies and speeds up such migrations, and reduces their cost, by offering a highly automated as-is migration solution. This blog demonstrates how easy it is to set up CloudEndure Migration and the steps involved in migrating SAP systems from the source environment to AWS.

CloudEndure Migration Architecture

The following diagram shows the CloudEndure Migration architecture for migrating SAP systems.

The major steps in this migration are:

- Agent Installation

- Continuous Replication

- Testing and Cutover
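The last step, testing and cutover, lends itself to scripting. The sketch below uses the API of AWS Application Migration Service, which this article's Benefits section refers to, rather than CloudEndure's own console. The call names (`describe_source_servers`, `start_test`, `start_cutover`) and the state values (`READY_FOR_TEST`, `READY_FOR_CUTOVER`, `CONTINUOUS`) are taken from the boto3 `mgn` client as we understand it, so check them against the current AWS documentation. The gating rule, launching only servers whose replication is continuous, is our assumption, and pagination is ignored.

```python
from typing import Iterable, List

PHASE_STATE = {"test": "READY_FOR_TEST", "cutover": "READY_FOR_CUTOVER"}


def servers_ready(items: Iterable[dict], lifecycle_state: str) -> List[str]:
    """IDs of source servers in the given lifecycle state whose replication is continuous."""
    return [
        s["sourceServerID"]
        for s in items
        if s.get("lifeCycle", {}).get("state") == lifecycle_state
        and s.get("dataReplicationInfo", {}).get("dataReplicationState") == "CONTINUOUS"
    ]


def launch(mgn, phase: str) -> List[str]:
    """Launch test or cutover instances for every server that is ready.

    `mgn` is a boto3 client, e.g. boto3.client("mgn"). Only the first page of
    results is read here; a real script would follow nextToken.
    """
    state = PHASE_STATE[phase]
    items = mgn.describe_source_servers(filters={"isArchived": False})["items"]
    ids = servers_ready(items, state)
    if ids and phase == "test":
        mgn.start_test(sourceServerIDs=ids)
    elif ids:
        mgn.start_cutover(sourceServerIDs=ids)
    return ids
```

Typical use is `launch(boto3.client("mgn"), "test")` once initial replication has completed. After the SAP basis team has validated the test instances, `launch(..., "cutover")` runs during the agreed cutover window.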

CloudEndure helps you overcome the following migration challenges effectively:

- Diverse infrastructure and OS types

- Legacy applications

- Complex databases

- Busy, continuously changing workloads

- Machine compatibility issues

- Expensive cloud skills required

- Downtime and performance disruptions

- Tight project timelines and limited budget

Use Cases

The most common use cases for CloudEndure Migration are:

- Lift and Shift, then optimize

- The vast majority of Windows/Linux servers, provided the agent can be installed on the source machine

- Replicating block storage devices such as SAN, iSCSI, physical disks, EBS, VMDK and VHD

- Replicating full machine/volume

Benefits

Access to advanced technology

Simplify operations and get better insights with AWS Application Migration Service (the successor to CloudEndure Migration) and its integration with AWS Identity and Access Management (IAM), Amazon CloudWatch, AWS CloudTrail and other AWS services.

Minimal downtime during migration

With AWS Application Migration Service, you can maintain normal business operations throughout the replication process. It continuously replicates source servers, which means little to no performance impact. Continuous replication also makes it easy to conduct non-disruptive tests and shortens cutover windows.

Reduced costs

AWS Application Migration Service reduces overall migration costs as there is no need to invest in multiple migration solutions, specialized cloud development, or application-specific skills. This is because it can be used to lift and shift any application from any source infrastructure that runs supported operating systems (OS).

Conclusion

This blog discussed how Sify can help its SAP customers migrate to AWS Cloud using the CloudEndure tool. Sify would be glad to help your organization, as your AWS Managed Services Partner, with a tailor-made solution for a seamless cloud migration and with your Cloud Infrastructure management thereafter.

CloudEndure Migration does not charge a license fee for automated migration to AWS. Each free CloudEndure Migration license allows 90 days of use following agent installation. During this period, you can start replicating your source machines, launch target machines, conduct unlimited tests, and perform a scheduled cutover to complete your migration.

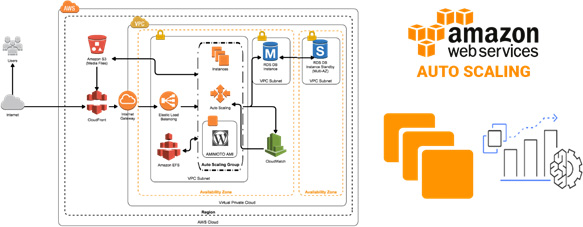

Automated Recovery of SAP HANA Database using AWS native options

This blog covers the options available in AWS to recover an SAP HANA database at low cost and without using SAP’s native HANA System Replication (HSR) tool. With a focus on low cost, Sify recommends a cloud-native solution that leverages EC2 Auto Scaling and Amazon EBS snapshots, an approach that is not feasible in an on-premises setup.

Solution Overview

The restore process leveraging Auto Scaling and EBS snapshots works across Availability Zones in a region. Snapshots provide a fast backup process that is independent of the database size. They are stored in Amazon S3 and replicated across Availability Zones automatically, which means we can create a new volume from a snapshot in another Availability Zone. In addition, Amazon EBS snapshots are incremental by default: only the changes since the last snapshot are stored. Automation is key to a resilient, highly available architecture, so every step needed to recover the database must be automated in case something fails or goes wrong.

Autoscaling Architecture

The following diagram shows the Auto Scaling architecture for systems in AWS.

Use Case

EBS Snapshots

Before enabling EBS snapshots, we must ensure that log backups are written to an Amazon EFS folder, which is available across Availability Zones.

Create a script that runs before the EBS snapshot command. Using the database system user’s credentials, the script makes an entry in the HANA backup catalog so that the database is aware of the snapshot backup. We then use the snapshot feature to take a point-in-time, crash-consistent snapshot across multiple EBS volumes without a manual I/O freeze. It is recommended to take the snapshot every 8-12 hours.
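A sketch of such a script is shown below. It first points HANA's log backups at a directory such as an EFS mount (the `basepath_logbackup` parameter in the `persistence` section of `global.ini`). Then it prepares a data snapshot, which creates the backup catalog entry. Next it takes a multi-volume, crash-consistent EBS snapshot with boto3's `create_snapshots`, and finally it closes the catalog entry as successful or unsuccessful. The HANA SQL follows the `BACKUP DATA ... CREATE/CLOSE SNAPSHOT` syntax as we understand it, so verify it against the SQL reference for your HANA revision. `run_sql` is a hypothetical helper that executes a statement as the database system user (for example through hdbsql) and returns rows for queries. The EFS path is also hypothetical.

```python
from typing import Callable, List, Optional, Sequence


def _quote(value: str) -> str:
    return "'" + value.replace("'", "''") + "'"   # escape single quotes for SQL


def log_backup_path_sql(path: str) -> str:
    """Point HANA log backups at a directory, e.g. an EFS mount such as /hana/logbackup."""
    return ("ALTER SYSTEM ALTER CONFIGURATION ('global.ini', 'SYSTEM') "
            f"SET ('persistence', 'basepath_logbackup') = {_quote(path)} WITH RECONFIGURE")


PREPARE_SQL = "BACKUP DATA FOR FULL SYSTEM CREATE SNAPSHOT COMMENT 'EBS snapshot'"
FIND_PREPARED_SQL = ("SELECT BACKUP_ID FROM M_BACKUP_CATALOG "
                     "WHERE ENTRY_TYPE_NAME = 'data snapshot' AND STATE_NAME = 'prepared'")


def close_snapshot_sql(backup_id: int, text: str, ok: bool) -> str:
    outcome = "SUCCESSFUL" if ok else "UNSUCCESSFUL"
    return f"BACKUP DATA FOR FULL SYSTEM CLOSE SNAPSHOT BACKUP_ID {backup_id} {outcome} {_quote(text)}"


def snapshot_hana(ec2, run_sql: Callable[[str], Optional[Sequence]],
                  instance_id: str) -> List[str]:
    """Register the snapshot in the backup catalog, snapshot all data volumes, then close it."""
    run_sql(PREPARE_SQL)
    backup_id = run_sql(FIND_PREPARED_SQL)[0][0]
    try:
        resp = ec2.create_snapshots(   # one crash-consistent set across all attached volumes
            InstanceSpecification={"InstanceId": instance_id, "ExcludeBootVolume": True},
            Description=f"SAP HANA data snapshot, backup ID {backup_id}",
            CopyTagsFromSource="volume",
        )
    except Exception:
        run_sql(close_snapshot_sql(backup_id, "EBS snapshot failed", ok=False))
        raise
    snapshot_ids = [s["SnapshotId"] for s in resp["Snapshots"]]
    run_sql(close_snapshot_sql(backup_id, ",".join(snapshot_ids), ok=True))
    return snapshot_ids
```

Scheduling `snapshot_hana(boto3.client("ec2"), run_sql, "<instance id>")` every 8-12 hours, for example from a cron job or an EventBridge rule, gives the cadence recommended above.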

The log backups are now stored in EFS and the full backups as EBS snapshots in S3. Both storage locations can be accessed from any AZ in the region and do not depend on a single AZ.

EC2 Auto Scaling

Next, we create an Auto Scaling group with a minimum and maximum of one instance. If there is an issue with the instance, the Auto Scaling group creates a replacement instance from the same AMI as the original.
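The "minimum and maximum of one" group can be created with boto3's `create_auto_scaling_group`. Listing subnets from different Availability Zones lets the replacement instance come up in another AZ. The group name, launch configuration name, subnet IDs and the 600-second health-check grace period are hypothetical values.

```python
from typing import List


def create_hana_asg(autoscaling, name: str, launch_config: str,
                    subnet_ids: List[str]) -> dict:
    """Self-healing group of exactly one HANA instance spread over several AZs.

    `autoscaling` is a boto3 client, e.g. boto3.client("autoscaling").
    """
    params = dict(
        AutoScalingGroupName=name,
        LaunchConfigurationName=launch_config,
        MinSize=1,                           # never fewer than one database host...
        MaxSize=1,                           # ...and never more than one
        DesiredCapacity=1,
        VPCZoneIdentifier=",".join(subnet_ids),  # subnets in different AZs
        HealthCheckType="EC2",
        HealthCheckGracePeriod=600,          # hypothetical: time for restore and start-up
    )
    autoscaling.create_auto_scaling_group(**params)
    return params
```

With minimum and maximum both set to one, the group never scales. Its only job is to replace the instance when a health check fails.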

We first create a golden AMI for the Auto Scaling group and use it in a launch configuration with the desired instance type. A shell script in the user data runs when the instance launches. It creates new volumes from the latest EBS snapshots and attaches them to the instance. The EBS fast snapshot restore feature can be used to reduce the initialization time of the newly created volumes.
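The user-data logic is sketched below in Python with boto3 rather than as a shell script. It finds the newest completed snapshot for each device, creates a volume from it in the new instance's Availability Zone, waits until the volume is available, and attaches it. The sketch assumes each snapshot carries a tag (hypothetically named `hana-device`) that records the device name it belongs to, for example copied from a tag on the source volume. The `gp3` volume type is also an assumption, and pagination of `describe_snapshots` is ignored.

```python
from typing import Dict, List

DEVICE_TAG = "hana-device"   # hypothetical tag holding the device name, e.g. "/dev/xvdf"


def latest_by_device(snapshots: List[dict]) -> Dict[str, str]:
    """Map device name -> newest completed snapshot ID for that device."""
    latest: Dict[str, dict] = {}
    for s in snapshots:
        if s.get("State") != "completed":
            continue                         # ignore snapshots still in progress
        tags = {t["Key"]: t["Value"] for t in s.get("Tags", [])}
        device = tags.get(DEVICE_TAG)
        if device and (device not in latest or s["StartTime"] > latest[device]["StartTime"]):
            latest[device] = s
    return {d: s["SnapshotId"] for d, s in latest.items()}


def restore_volumes(ec2, instance_id: str, availability_zone: str) -> List[str]:
    """Create volumes from the latest snapshots and attach them to the new instance."""
    snaps = ec2.describe_snapshots(
        OwnerIds=["self"], Filters=[{"Name": "tag-key", "Values": [DEVICE_TAG]}]
    )["Snapshots"]
    volume_ids = []
    for device, snap_id in sorted(latest_by_device(snaps).items()):
        vol = ec2.create_volume(SnapshotId=snap_id, AvailabilityZone=availability_zone,
                                VolumeType="gp3")
        ec2.get_waiter("volume_available").wait(VolumeIds=[vol["VolumeId"]])
        ec2.attach_volume(Device=device, InstanceId=instance_id, VolumeId=vol["VolumeId"])
        volume_ids.append(vol["VolumeId"])
    return volume_ids
```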

If the database is started at this point, it will be in a crash-consistent state. To restore it to the latest state, we can apply the log backups stored in EFS, which the AMI mounts automatically. Additional care must be taken so that the SAP application server is aware of the new IP address of the restored SAP HANA database server.
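One possible way to handle the IP change is sketched here. It assumes the SAP application servers reach the database through a DNS name (hypothetically `hanadb.sap.internal.`) in an Amazon Route 53 private hosted zone rather than through a hard-coded IP. After the restore, the record is repointed with an UPSERT via boto3's `change_resource_record_sets`. The zone ID, record name and 60-second TTL are hypothetical. Other approaches, such as a secondary network interface that moves with the role, are also common.

```python
def point_hana_alias(route53, zone_id: str, record_name: str, new_ip: str,
                     ttl: int = 60) -> dict:
    """Repoint the database host name at the restored instance's private IP.

    `route53` is a boto3 client, e.g. boto3.client("route53").
    """
    change_batch = {
        "Comment": "Repoint SAP HANA host alias after automated restore",
        "Changes": [{
            "Action": "UPSERT",                  # create or overwrite the record
            "ResourceRecordSet": {
                "Name": record_name,
                "Type": "A",
                "TTL": ttl,                      # short TTL so clients pick up changes quickly
                "ResourceRecords": [{"Value": new_ip}],
            },
        }],
    }
    route53.change_resource_record_sets(HostedZoneId=zone_id, ChangeBatch=change_batch)
    return change_batch
```

Run at the end of the user-data script, this lets the application servers reconnect by name without any change to their profiles.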

Benefits

- Better fault tolerance – Amazon EC2 Auto Scaling can detect when an instance is unhealthy, terminate it, and launch an instance to replace it. Amazon EC2 Auto Scaling can also be configured to use multiple Availability Zones. If one Availability Zone becomes unavailable, Amazon EC2 Auto Scaling can launch instances in another one to compensate.

- Better availability – Amazon EC2 Auto Scaling helps ensure that the application always has the right capacity to handle the current traffic demand.

- Better cost management – Amazon EC2 Auto Scaling can dynamically increase and decrease capacity as needed. Since AWS bills only for the EC2 instances you run, you can save money by launching instances when they are needed and terminating them when they aren’t.

Conclusion

This blog discussed how Sify can help SAP customers save costs by automating the recovery process for the HANA database with native AWS tools. Sify would be glad to help your organization, as your AWS Managed Services Partner, with a tailor-made SAP on AWS Cloud solution for a seamless cloud migration and with your Cloud Infrastructure management thereafter.